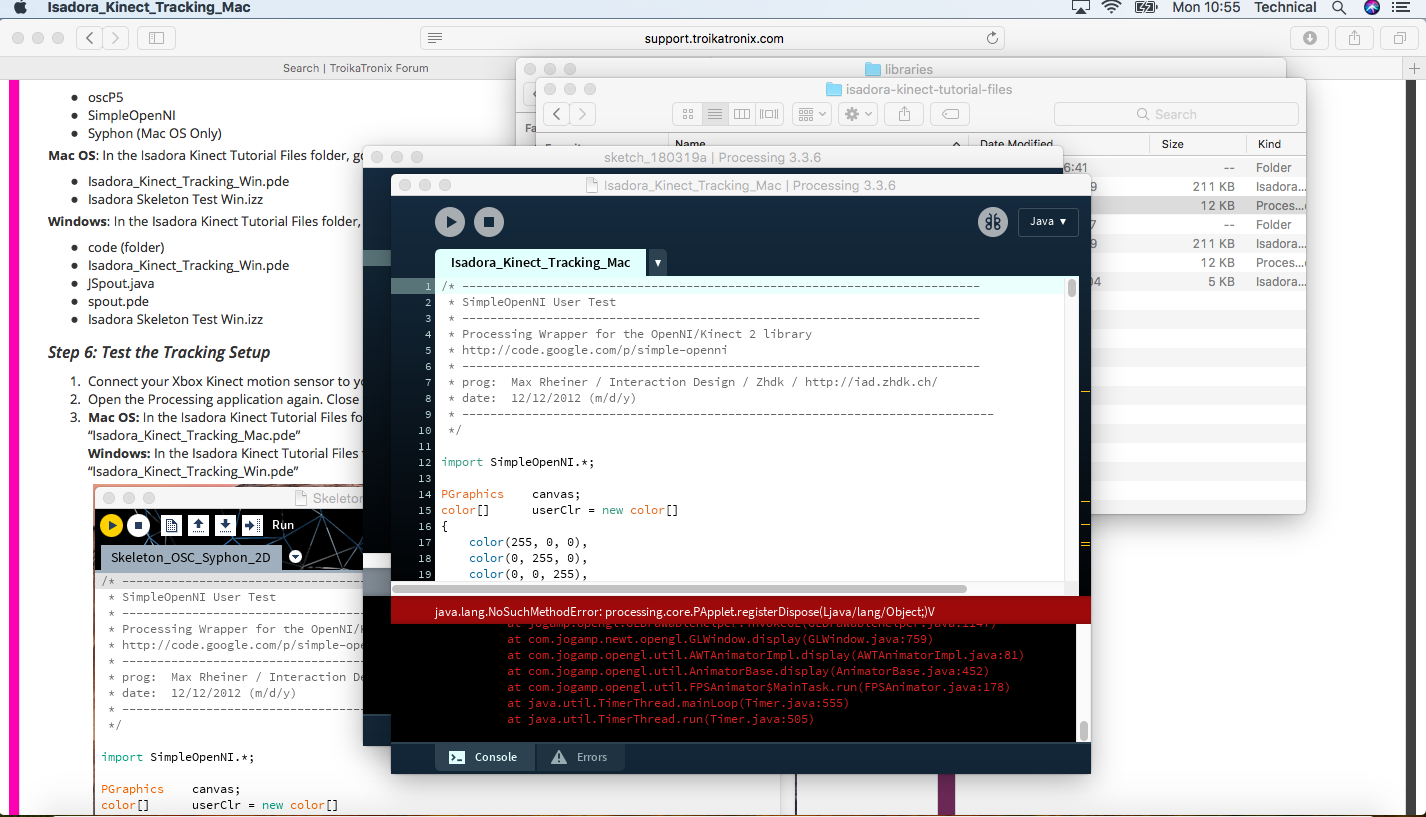

It's unstable, but at least you can get depth data.

EDIT 2: Working code: this branch already contains a subset of the changes I was making, and it actually runs. I am running on a late-2011 MacBook Pro with OS X Lion. Also, before you say to plug in the 12V DC adapter or switch the USB port, I have already done so.

OpenKinect is an open community of people interested in making use of the amazing Xbox Kinect hardware with our PCs and other devices. The OpenKinect community consists of over 2000 members contributing their time and code to the project.

Read on for our selection of the best Kinect 3D scanning software. Note: This app was exported as a Mac version.

I have downloaded the Kinect 1.8 SDK and the Kinect toolkit. Once the Kinect software is downloaded, I am unable to open it or have the software recognize the Kinect camera. I've watched every video on YouTube, factory reset my Windows 10 tablet PC, and purchased a new Xbox 360 Kinect camera, still with no results.

Problem: We have a Kinect+Minecraft prototype but no code to calibrate it for a curved or cylindrical screen. My hunch is that the OpenGL code from Charles Henden's project will allow us to run a Minecraft mod on a curved (or even asymmetrical) screen. However, I have been reminded not to use the word "warp" for this; true, it is adjusting the camera for a half-cylindrical screen. And there is still projection mapping to be considered, but only in OpenGL. Combining that with stereo may pose more challenges, but even reconfigurable surface warping would be a great start.

What is the current version of Minecraft? Java (OpenGL) or Minecraft Win10 (Pocket Edition) with DirectX 12? I have just been told our version uses Java, one good bit of news for the day! With Java and OpenGL, the Minecraft prototype might work in stereo.
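One way to think about adjusting the camera for a half-cylindrical screen is to split the arc into several flat perspective cameras, each covering an equal angular slice. The sketch below is only an illustration, not the prototype's code: it assumes the viewer sits at the centre of curvature of the screen, and the function names `slice_cameras` and `arc_to_slice` are hypothetical.

```python
import math


def slice_cameras(span_deg=180.0, n_slices=3):
    """Return a (yaw_deg, fov_deg) pair for each flat camera slice.

    Assumption: the viewer is at the centre of curvature, so every
    slice covers the same angle and yaw 0 points at the screen centre.
    """
    fov = span_deg / n_slices
    start = -span_deg / 2.0
    return [(start + fov * (i + 0.5), fov) for i in range(n_slices)]


def arc_to_slice(u, span_deg=180.0, n_slices=3):
    """Map a position u in [0, 1] along the arc to (slice_index, x_ndc).

    x_ndc is the horizontal coordinate in [-1, 1] inside that slice's
    flat perspective render, so equal steps along the arc correspond
    to equal viewing angles rather than equal steps on a flat image.
    """
    if not 0.0 <= u <= 1.0:
        raise ValueError("u must be between 0 and 1")
    fov = span_deg / n_slices
    angle = (u - 0.5) * span_deg                   # viewing angle on the arc
    index = min(int(u * n_slices), n_slices - 1)   # which slice holds u
    yaw = -span_deg / 2.0 + fov * (index + 0.5)    # that slice's centre
    local = math.radians(angle - yaw)              # angle within the slice
    x_ndc = math.tan(local) / math.tan(math.radians(fov / 2.0))
    return index, x_ndc


if __name__ == "__main__":
    for yaw, fov in slice_cameras():
        print(f"camera yaw {yaw:+.1f} deg, horizontal fov {fov:.1f} deg")
    print(arc_to_slice(0.25))
```

A single perspective camera cannot cover 180 degrees because the tangent diverges at 90 degrees off-axis, which is why the sketch uses several narrower slices. Stereo would need this done once per eye, and a viewer away from the centre of curvature would need a different mapping.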
Projection Mapping Minecraft: Mining in the Real World

I did not mention all these in my 22 May presentation at the Digital Heritage 3D conference in Aarhus, but here are some working notes for future development.

How Xbox Kinect camera tracking could change the simulated avatar:

- Avatars in the simulated world change their size, clothing or inventories: they scale relative to the typical sizes and shapes of the typical inhabitants, or scale depends on the scene or avatar character chosen.
- Avatars change to reflect people picking up things.
- Avatars role-play: different avatars see different things in the digital world.
- Narrator gestures affect the attention or behavior of the avatar.

How Xbox Kinect camera tracking could change the simulated world or digital objects in that world:

- Objects move depending on the biofeedback of the audience or the presenter.
- Interfaces for Skype and Google Hangouts: remote audiences can select part of the screen and filter scenes or wire-frame the main model.
- Levels of authenticity and time layers can be controlled, or are passively / indirectly affected by narrator motion or audience motion / volume / infrared output.

We have developed an experimental version of FAAST with support for the Kinect for Windows v2, available for download here (64-bit only).

My abstract for the 21 May talk at the Digital Heritage 3D representation conference at Moesgaard Museum, Aarhus, Denmark

Title: Motion Control For Remote Archaeological Presentations

Displaying research data between archaeologists or to the general public is usually done through linear presentations, timed or stepped through by a presenter. Through the use of motion tracking and gestures tracked by a camera sensor, presenters can provide a more engaging experience to their audience, as they won't have to rely on prepared static media, timing, or a mouse.

Screenshot of prototype and pointing mechanism at the HIVE, Curtin University.
An alternative is for a character inside the digital environment to mirror the body gestures of the presenter; wherever the virtual character points will trigger slides or other media that relate to the highlighted 3D objects in the digital scene.

Acknowledgement: I would like to thank iVEC summer intern Samuel Warnock for kicking off the prototype development for me and Zigfu for allowing us access to their SDK.

Figure 1.

Figure 2. Screenshot of stereo curved screen at the HIVE, Curtin University.

FAAST is currently available for Windows only. This is based on preliminary software and/or hardware, subject to change.

The VAC system is a useful program which you use to issue commands to your flight simulator, role-playing game or any program. Use your voice to speak words or phrases to issue commands to your favorite games. Since you have your hands full while playing those busy games, you can now put your voice to work for you. VAC uses a unique method of phrase recognition which greatly reduces unwanted commands caused by ambient noise.

As an alternative to Kinect One (which requires Windows 8), the PS4 Eye camera has some interesting features/functions, although it is lower resolution and does not have an infrared (IR) blaster. It can cost $85 AUD or cheaper online or via JB Hi-Fi. How does it compare to Microsoft's Kinect One camera? One possible future use for the PS4 Eye camera is the VR Project Morpheus.

Features:

- programmable controls for frame rate, mirror and flip
- standard serial SCCB interface
- cropping and windowing
- automatic black level calibration (ABLC)
- support 2×2 binning
- one of the cameras can be used for generating the video image, with the other used for motion tracking (could be used for eye tracking)
- pad, move, face, head and hand recognition/tracking
- supports image sizes: 1280×800, 640×400, and 320×200
- supports horizontal and vertical sub-sampling
- programmable I/O drive capability
- supports output formats: 8/10/12-bit RAW RGB
- on-chip phase lock loop (PLL) (MIPI/LVDS)
- embedded 256 bits one-time programmable (OTP)

“Bigboss has successfully streamed video data from the PS4 camera to OS X!”

“It is the first public driver for PlayStation 4 Camera licensed under gpl.”

We are trying to create some applications/extensions that allow people to interact naturally with 3D built environments on a desktop, by pointing at or walking up to objects in the digital environment, or on a large surround screen (figure below is of the Curtin HIVE), using a Kinect (SDK 1 or 2) for tracking.

- Green screen narrator into a 3D environment (background removal).
- Control an avatar in the virtual environment using the speaker's gestures.
- Ideally the speaker could also change the chronology of the built scene with gestures (or voice), alter components or aspects of buildings, and move or replace parts or components of the environment.
- Trigger slides and movies inside a Unity environment via speaker finger-pointing.
- Possibly also use Leap SDK (improved).
- We can have a virtual/tracked hand point to objects, creating an interactive slide presentation to the side of the Unity environment.
- Better employ the curved screen so that participants can communicate with each other.
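The idea of a tracked hand pointing at objects to trigger slides can be sketched as a ray test. This is a minimal illustration, not the prototype's Unity or Kinect SDK code: it assumes the tracker already supplies elbow and hand positions as plain `(x, y, z)` tuples in the same coordinate space as the scene, it approximates each object by a bounding sphere, and the function names and object names are hypothetical.

```python
import math


def pointing_ray(elbow, hand):
    """Unit direction from the elbow joint through the hand joint."""
    d = tuple(h - e for e, h in zip(elbow, hand))
    length = math.sqrt(sum(c * c for c in d))
    if length == 0.0:
        raise ValueError("elbow and hand positions coincide")
    return tuple(c / length for c in d)


def ray_hits_sphere(origin, direction, center, radius):
    """True if the ray from origin along a unit direction hits the sphere."""
    oc = tuple(c - o for o, c in zip(origin, center))
    t = sum(a * b for a, b in zip(oc, direction))  # distance to closest approach
    if t < 0.0:
        return False  # the object is behind the hand
    closest_sq = sum(a * a for a in oc) - t * t
    return closest_sq <= radius * radius


def pointed_object(elbow, hand, objects):
    """Return the name of the nearest object the forearm points at, or None.

    objects maps a name to (center, radius), a bounding sphere that
    stands in for a scene object (an assumption made for this sketch).
    """
    direction = pointing_ray(elbow, hand)
    best_name, best_dist = None, math.inf
    for name, (center, radius) in objects.items():
        if ray_hits_sphere(hand, direction, center, radius):
            dist = math.dist(hand, center)
            if dist < best_dist:
                best_name, best_dist = name, dist
    return best_name


if __name__ == "__main__":
    scene = {
        "column": ((0.0, 1.0, 3.0), 0.5),
        "statue": ((2.0, 1.0, 3.0), 0.5),
    }
    slides = {"column": "slide_03", "statue": "slide_07"}  # hypothetical mapping
    target = pointed_object((0.0, 1.0, 0.0), (0.0, 1.0, 0.4), scene)
    print(target, slides.get(target))
```

Real joint data is noisy, so a practical version would probably smooth the joints over a few frames and require the same target to be held briefly before triggering a slide.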


Author: Meron
